Azure Route Server, a cloud-native Azure service, is designed to simplify dynamic routing between network virtual appliances […]

Cisco bought Isovalent. Isovalent developed a product called “Cilium”. Cilium uses a technology called eBPF. What […]

I attended KubeCon 2024 last week in Salt Lake City. It was a great show, with […]

Objective: The objective of this scenario is to guide you through the steps necessary to extend […]

Google Cloud Platform (GCP) offers various networking services, each with its own set of limitations and […]

As network architects, we know that there are pros and cons in designing any network in […]

Scenario: ABC Healthcare (a fictitious company), a leading healthcare provider, operates solely within the AWS cloud […]

Introduction: Zero Trust is a security framework. It is not a product. It is a mindset […]

AWS Direct Connect (DX) is a networking service that provides private network connections between customer facilities […]

AWS Control Tower automates the setup of a new AWS Landing Zone using best-practices blueprints for […]

An AWS landing zone is a well-architected, multi-account AWS environment based on security and compliance best […]

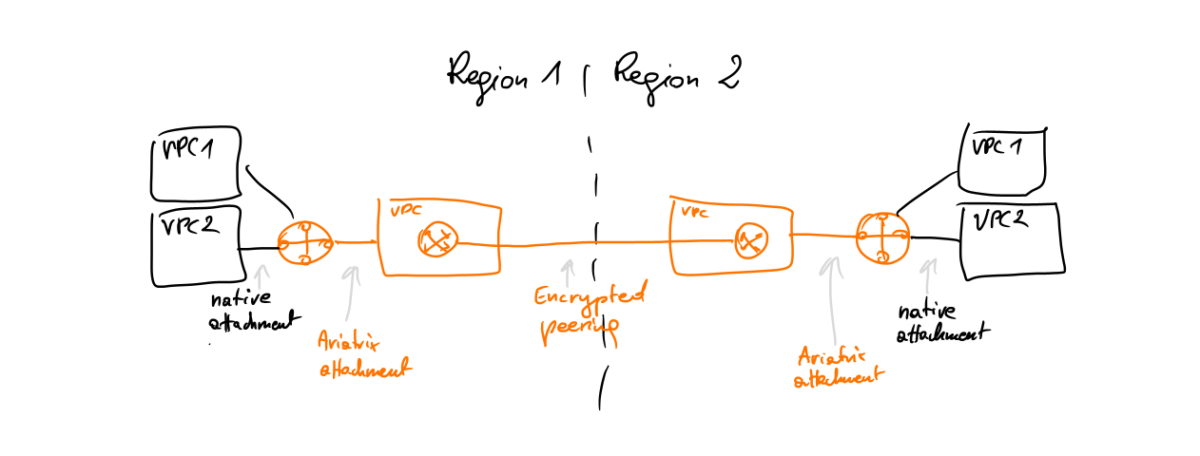

AWS CloudWAN New Terminologies: AWS CloudWAN = Global Construct = Control Plane only; CNE = Core […]

The following are considered core AWS services related to networking: AWS Network Peering, AWS […]

In this section, we discuss the essential AWS Services. We either require these services to operate, […]

This is a series of posts discussing AWS Networking Services. Introduction: This document aims to provide […]

As networking professionals, we must stay informed about the latest advancements in GenAI. To help my […]

Let’s distinguish between Large Language Models (LLMs) and Deep Learning Recommendation Models (DLRMs). Large Language Models […]

When you read the title, a few things come to mind. Q: “What does it actually […]

Q: How do neural networks learn from data? Please describe the main process or methodology used. […]

NetFlow v9 gives you both options: either IPT (IP Network Traffic) or L7 (Layer 7 […]

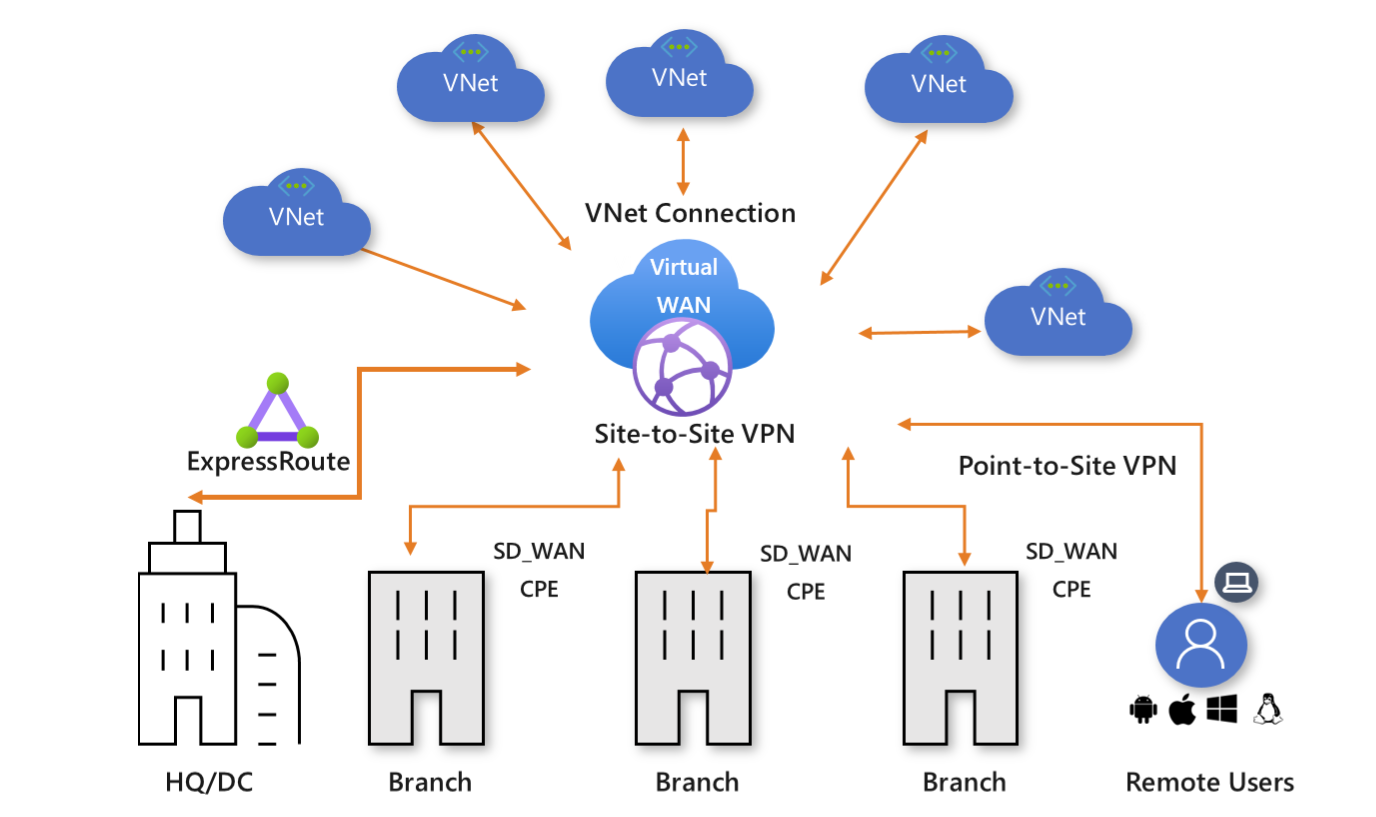

Azure Virtual WAN is a networking service that combines many networking, security, and routing functionalities to […]

Recently, I attended the RSA Conference 2024, a premier gathering showcasing the most innovative cybersecurity advancements. […]

I thought the private cloud definition was well established, but recently, an eLearning course caused some […]

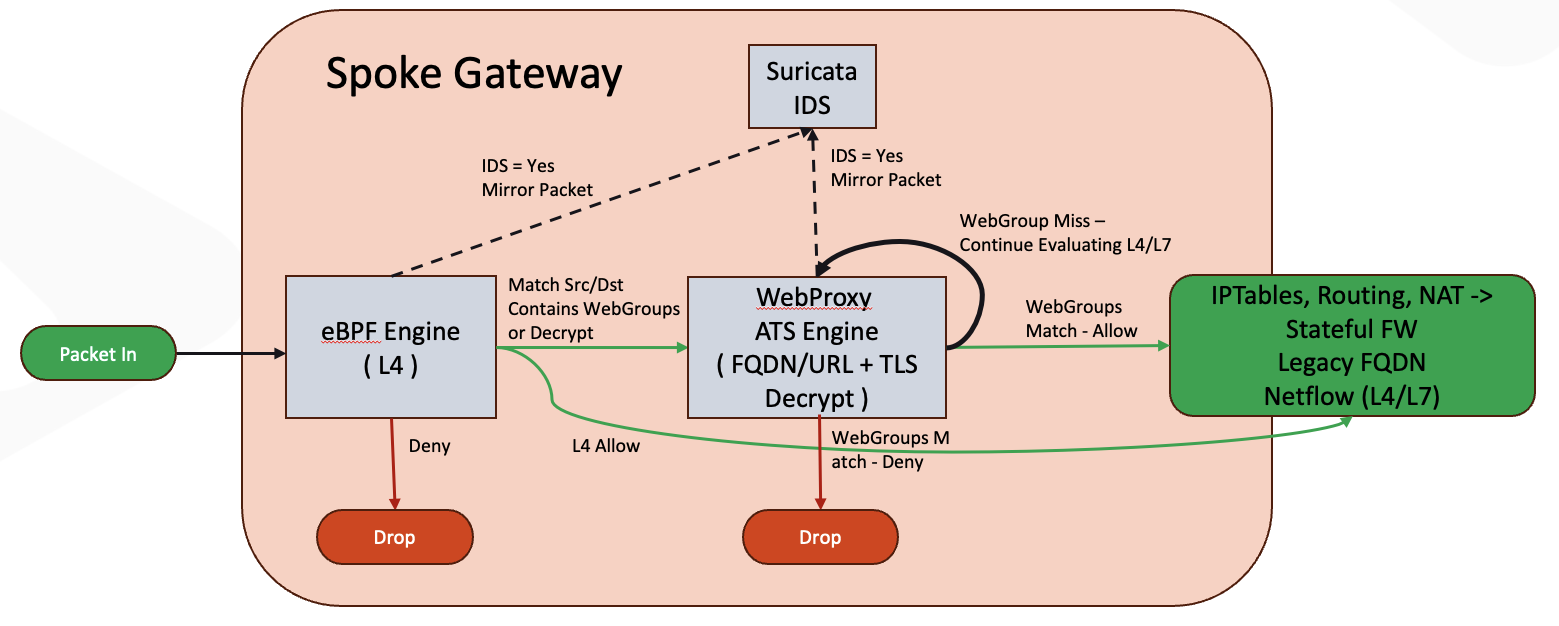

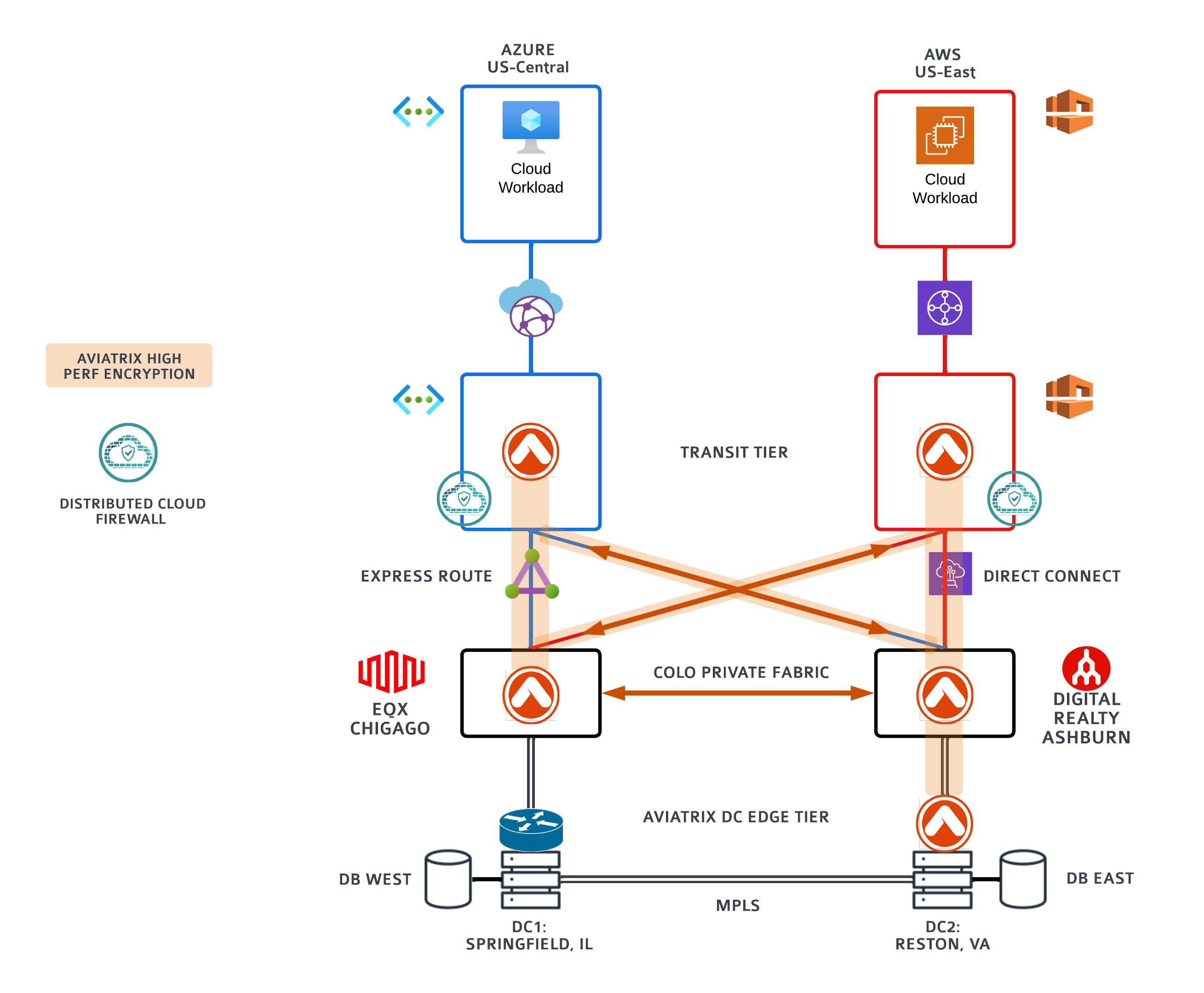

Title: Aviatrix’s Distributed Cloud Firewall: Harnessing open source technologies for enhanced security Introduction: The need for […]

In my view, this deal holds significant promise, as I explained in my detailed article. One […]

It is time for network admins, engineers, architects, and technology leaders to adopt a clear path […]

Enterprise enablement and technical pre-sales are challenging job functions in any organization. A typical instructor, engineer, […]

You must have heard that native networking and security services provided by CSPs (Cloud Service […]

This blog covers how AWS and Aviatrix come together to reduce AWS NAT Gateway Cost with Improved […]

What is AWS Transit Gateway (AWS-TGW)? AWS Transit Gateway is a service that allows customers to […]

A cloud network backbone, also known as a cloud backbone, is a high-speed network infrastructure that […]

This is a five-part series of articles examining five critical mistakes organizations face when building a […]

AKS is Azure Kubernetes Service. It is a K8S service managed by Azure. https://learn.microsoft.com/en-us/azure/aks/configure-azure-cni#frequently-asked-questions Q: What […]